Redesigning RNNs for Fully Physics-Informed Loss Function

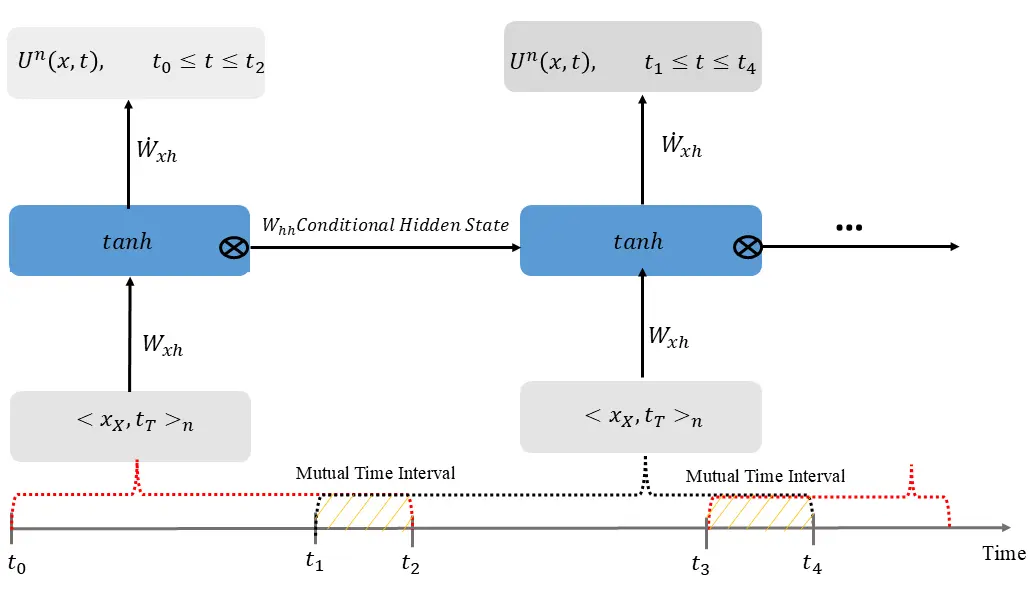

Training Recurrent Neural Networks (RNNs) solely with physics-informed loss functions is challenging, because the derivatives that appear in the loss must be approximated numerically between recurrent blocks. In this work, we introduce structural modifications to RNNs that remove this dependency on numerical derivatives, so that purely physics-informed loss functions can be formed using backpropagation. An archived version of the paper is currently available, and the source code will be released upon official publication.
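
To make the issue with numerical derivatives concrete, here is a minimal sketch of a physics-informed loss that relies on finite differences between consecutive sequence outputs. It is not the method proposed in the paper, and everything in it is an assumption chosen for illustration: the toy ODE `du/dt = -k*u`, the rate `k`, and the helper names `fd_physics_loss` and `exact_samples`. The exact solution stands in for the outputs of a sequence model.

```python
import math


def fd_physics_loss(u, dt, k):
    """Mean squared residual of du/dt + k*u = 0 (hypothetical toy ODE).

    The time derivative is approximated with a forward difference
    between consecutive steps, as a conventional RNN setup would need.
    """
    residuals = [(u[i + 1] - u[i]) / dt + k * u[i] for i in range(len(u) - 1)]
    return sum(r * r for r in residuals) / len(residuals)


def exact_samples(n_steps, dt, k):
    """Samples of the exact solution u(t) = exp(-k*t), standing in for model outputs."""
    return [math.exp(-k * i * dt) for i in range(n_steps)]


k = 1.0  # assumed decay rate, for illustration only

# Same time span [0, 1], sampled with two different step sizes.
loss_coarse = fd_physics_loss(exact_samples(11, 0.1, k), 0.1, k)
loss_fine = fd_physics_loss(exact_samples(101, 0.01, k), 0.01, k)

print(f"coarse step loss: {loss_coarse:.3e}")
print(f"fine step loss:   {loss_fine:.3e}")
```

Even though the inputs are exact solution values, the loss is not zero: the forward difference introduces a discretization error that depends on the step size. A loss built from derivatives obtained by backpropagation would not carry this step-size-dependent error.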